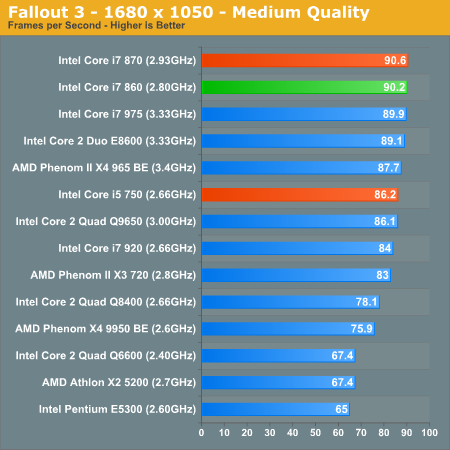

Fallout 3 Game Performance

Bethesda’s latest game uses an updated version of the Gamebryo engine (Oblivion). This benchmark takes place immediately outside Vault 101. The character walks away from the vault through the Springvale ruins. The benchmark is measured manually using FRAPS.

Gamers would be hard-pressed to notice a difference between the Core i5 750 and the 860, and definitely not between the 860 and 870. The latter two are nearly equal here.

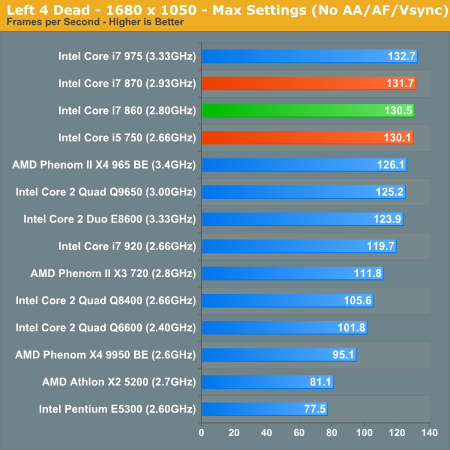

Left 4 Dead

Zombies? Check. Zombie killing performance:

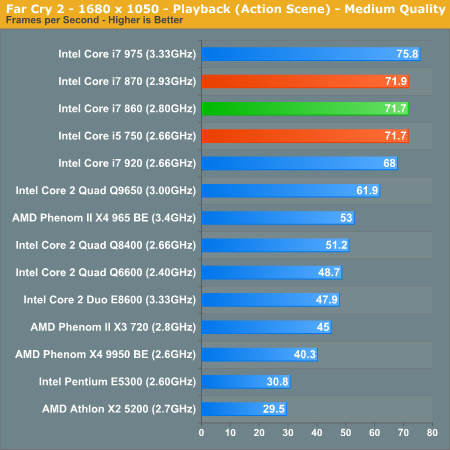

FarCry 2 Multithreaded Game Performance

FarCry 2 ships with the most impressive benchmark tool we’ve ever seen in a PC game. Part of this is due to the fact that Ubisoft actually tapped a number of hardware sites (AnandTech included) from around the world to aid in the planning for the benchmark.

For our purposes we ran the CPU benchmark included in the latest patch:

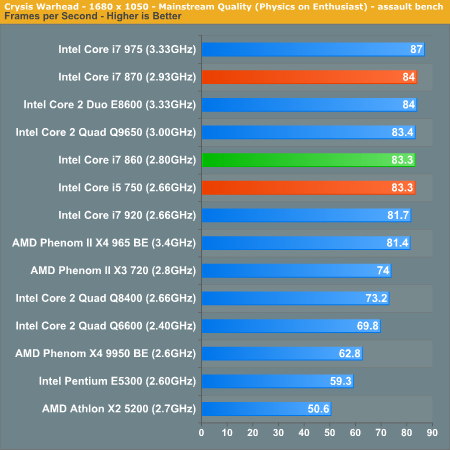

Crysis Warhead

121 Comments


KoolAidMan1 - Monday, September 21, 2009 - link

Ian - In response to:

"1. If the 860 and 920 are oc'd to the same clock, presumably with Turbo off being the most sensible setting, which is faster? How does power consumption differ? (wrt to total power used for a given task)

I've still not found a site which has done this comparison. For me, the comparison data of 860 vs. 920 at stock speeds with Turbo active is interesting, but not useful."

It has already been done with the i7 870, a CPU that has been shown to be marginally faster than the i7 860 in most tests. What follows are benchmarks where the i5 750, i7 870, and i7 920 are run at the 750/920 common clock speed of 2.66GHz and at the very attainable i7 870 turbo speed of 3.2GHz, with Turbo off in all cases.

http://www.hardocp.com/article/2009/09/07/intel_ly...

The difference in nearly all gaming benchmarks, using settings that take the GPU out of the mix as a bottleneck and with all CPUs at the same clock speeds, is within a very tight percentage range: at the very most a 10% spread. With Crysis and Far Cry 2 it is closer to a 1% spread. Multimedia benchmarks have a similar or slightly larger spread, but nothing I would consider significant, and certainly not in all cases.

Most things being the same (at least in games which is what I mainly use my PC for anyways, work and everything else are done on my OS X machines), the LGA 1156 CPUs are more appealing to me due to their lower net cost and comparable performance levels relative to consumer level LGA 1366 CPUs and boards.

Eisenfaust - Monday, September 21, 2009 - link

Some comments. Intel claims to have split the market into high end, midrange and low end. 'Lynnfield' XEONs inherited the IMC from the high-end X5570-type DP XEON CPUs, outperforming the LGA1366 single-socket XEON W35XX CPUs both in the usability of RDIMMs (up to 8GB per module!) and in speed (DDR3-1333 officially supported with 1 DIMM per channel). And, not to mention, the higher NB clock of 2.40 GHz instead of 2.13 GHz.

Several tests with highly parallel code for numerical modelling showed that a XEON W3550 outperforms a XEON 3470 IF we lift the NB clock to 2.66 GHz, as used for the W3580 type, AND also use DDR3-1333 memory. Well, if someone is looking for a small, fast midrange server box that also utilises GPGPU AND maybe a fast SAS RAID card (PCIe 2.0), they will be better off with the X58-Tylersburg platform than with 3410 Ibex-Peak.

But now look at Intel's harassment: high end is still on top, also in price; low end (Lynnfield) technically outperforms midrange (even in the IMC), but midrange still offers advantages. Yet why spend the same money on a W3550 XEON if someone can obtain more memory (32 GB instead of 24 GB, even if 32 GB is slower) and a more sophisticated IMC? Well, this may be looking too sharply at some technical details, but when the XEON X5570 was introduced Q3/2009, which is the same time the W3550 at D0 stepping was introduced, the modern IMC technology was already market-ready, and it is now also realised in Lynnfield. Why do we not see LGA1366 midrange XEON CPUs with all these benefits? The modular design should simply allow those changes.

I still prefer the LGA1366 three-channel memory solution because I definitely do get advantages from it, but I'm not willing to spend more than 1000 EUROs on a W/X55XX CPU, nor do I want to spend money on an outdated 'midrange' CPU. And speaking of the NOW, Lynnfield does have some disadvantages, so it is not an alternative to an LGA1366 platform.

I do not understand this policy; there is no clean and straightforward thread.

mapesdhs - Monday, September 21, 2009 - link

Thanks for the reference! Alas, their choice of oc speed is way too low to be useful in this regard. I would have thought at least 3.8 to be a sensible target, but I guess they wanted a clock that all the CPUs including the Ph2 could use. What I'd like to know is, assuming with a good air cooler both the 860 and 920 can run at 4GHz stable, which is faster for tasks that do use all 4 cores? (ie. Turbo off)

Also, given the use of a good air cooler, what's the highest sensible oc each CPU can attain? From all I've read, the 920 D0 should be able to reach higher oc levels (4.2 to 4.3, vs. around 4.0 for the 860?), but on the other hand the larger power consumption increase with the 920 might mean the 860 is a better buy if the performance is within a reasonable range. Atm there's no data for this.

Heh, if I was rich I'd buy both systems and report the differences. :D

Thanks for replying!!

Ian.

strikeback03 - Monday, September 21, 2009 - link

Check the other article Anand just posted; it seems that with both at 3.8GHz and almost everything equal (except uncore) the Bloomfield is ~3% faster on average. That doesn't address the question of max OC, but as that is always going to vary by individual processor anyway, they would have to get enough processors to try and get an average for each type.

KoolAidMan1 - Monday, September 21, 2009 - link

Ian - No problem. The new i5 and i7 CPUs have just *barely* been out long enough for standard reviews to be published. I'm actually surprised that we got non-turbo "apples-to-apples" benchmarks as it stands. I reckon that we'll see overclocking articles and benchmarks on them soon enough and that your questions will be answered.

MouseBTFH - Sunday, September 20, 2009 - link

I've been searching around for updated release information on SATA-3. According to articles on several sites on the web posted around January of this year, SATA-3 was supposed to be available mid-year. It's not out yet, or if it is I can't find any companies/motherboards that support it.

I'd really like to see an examination of Lynnfield vs. Bloomfield performance using SATA-3, if/when it becomes available on either or both platforms.

Scheme - Monday, September 21, 2009 - link

The Marvell 6Gb controllers had significant problems and mobo manufacturers pulled them from their initial P55 designs.

The Barracuda XT announcement explains where they're at right now.

Wwhat - Sunday, September 20, 2009 - link

The claim that W7 does a better job at 'grouping threads' made me laugh; another way to put it would be 'it sucks at multithreading' then, eh.

has407 - Sunday, September 20, 2009 - link

No. Look at how virtually any MP-aware OS has done scheduling for decades: try to spread the load equitably over CPUs. That yields the best absolute throughput, and all other things being equal, it always will.

But things are not so simple today. The equation is more complex, because throughput/power scaling is non-linear: it tends to come in quantum steps, especially the first step (CPU off vs. CPU on even at minimal clock rate).

Only relatively recently have OS's considered throughput/watt--or more specifically, the relationship (often non-linear) introduced by systems that can dynamically enable/disable processing elements--as a factor in the scheduler's decisions.

In short: "Grouping threads" has nothing to do with whether the OS "sucks at multithreading" (all decent MP-aware OS's are nominally equivalent), but whether it accounts for power/throughput in its scheduling decisions.

OddTSi - Saturday, September 19, 2009 - link

I know the likelihood of anyone reading this post this deep in the comments is slim and the likelihood of it doing any good is even slimmer, but I have to point this out.

Power consumption benchmarks are only useful for telling us how much cooling we'll need for a given chip, in which case telling us total system power consumption is rather useless. If that is the intent here, some thought should be put into figuring out a way of, at the very least, accurately estimating the power consumption of the chip.

If the reason for showing system power consumption is to give us some idea of how much it'll cost us to run that particular setup (or how much damage you're doing to the environment if you're of that mindset), then the benchmarks really should be measuring energy consumption. It's like the old days when marketing was done based on CPU frequency: that only told us half the story.

Idle benchmarks won't differ at all between power and energy consumption measurements, but everything off-idle WILL differ. If you tell us that CPU1 uses 100W while at full load converting a video and CPU2 uses 120W it makes people think that CPU1 is 20% more efficient, but that may not necessarily be the case. If CPU1 takes 12 minutes to convert that video and CPU2 takes only 10, then they're equally efficient because they have used the same amount of energy to perform the same task.
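The arithmetic behind that example can be sketched in a few lines of Python. This is only an illustration using the hypothetical CPU1/CPU2 figures above, and it assumes a constant power draw for the whole task; the function name `energy_wh` is made up for this sketch.

```python
def energy_wh(power_watts: float, minutes: float) -> float:
    """Energy in watt-hours, assuming a constant power draw over the task."""
    return power_watts * minutes / 60.0


# Hypothetical figures from the example: same task, different power and time.
cpu1_wh = energy_wh(100, 12)  # 100 W for 12 minutes
cpu2_wh = energy_wh(120, 10)  # 120 W for 10 minutes

print(f"CPU1: {cpu1_wh:.1f} Wh, CPU2: {cpu2_wh:.1f} Wh")
```

Both come out to 20 Wh, which is why the lower-wattage chip is not automatically the more efficient one.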

What should be done with these non-idle benchmarks is to perform a specific task and measure how much energy was consumed in the process. Report it in watt-seconds or kilowatt-hours or however you want, but just report it in units of energy.

Also, while we're discussing changing to energy consumption, it should be noted that some of what we do on computers takes a fixed amount of time regardless of computer power (e.g. watching videos/movies, something that almost everyone does), so doing a full-load test on something of that nature would be impossible/useless. But it does present an interesting scenario: a fixed-duration task, for example playing a Blu-ray movie, will create varying levels of load (and in turn power usage) on CPUs of different performance, so even though it always runs for the same amount of time, there is still a reason to measure the energy consumption of such a scenario.
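For a fixed-duration workload like that, power varies over time, so energy has to be accumulated from readings rather than computed from a single wattage. A minimal sketch, assuming you have timestamped wall-power readings from some meter: the sample values below are invented for illustration, and `energy_joules` is a hypothetical helper, not part of any benchmark tool.

```python
def energy_joules(samples):
    """Integrate (time_seconds, watts) samples with the trapezoidal rule.

    `samples` must be sorted by time. Returns energy in joules (watt-seconds).
    """
    total = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        total += 0.5 * (p0 + p1) * (t1 - t0)
    return total


# Invented readings for the same two-minute playback on two systems.
faster_cpu = [(0, 60), (60, 62), (120, 61)]
slower_cpu = [(0, 75), (60, 80), (120, 78)]

print(energy_joules(faster_cpu), "J vs", energy_joules(slower_cpu), "J")
```

The run time is identical for both systems, so any difference in the totals comes purely from how hard each one has to work during playback.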